How much high-bandwidth memory (HBM) is enough? For Meta, the answer appears to be about 0.5 TB: that is the maximum HBM capacity planned for one of the new AI accelerators it announced today.

Meta Inc. announced new products

Meta, the company behind Facebook and Instagram, today announced four new Meta Training and Inference Accelerator (MTIA) chips. These custom chips, developed in partnership with Broadcom, are designed to handle a range of computationally intensive tasks for the social media giant, including ranking and recommendation (R&R) training and inference, as well as training foundation models and running them in inference mode.

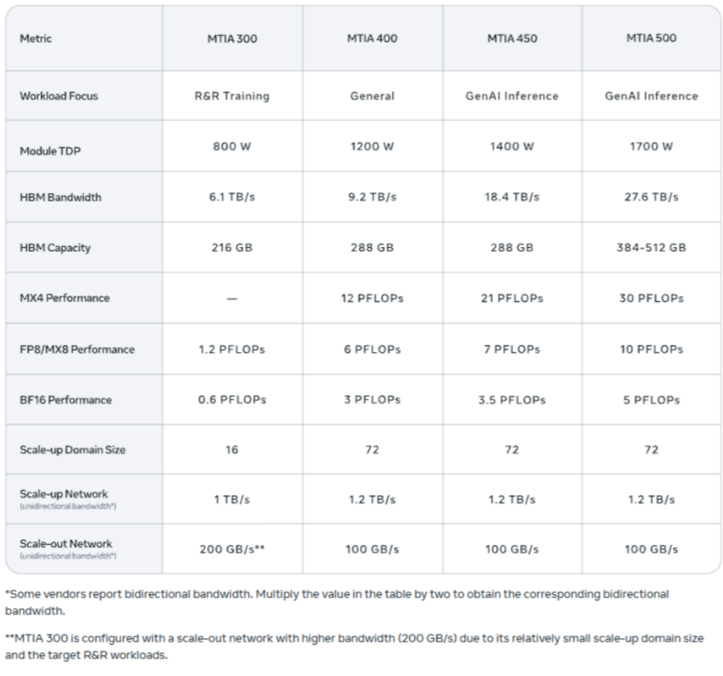

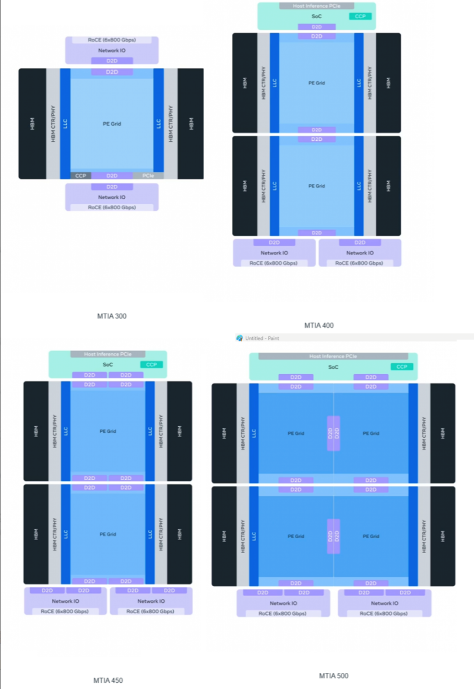

Each chip is designed to accelerate a specific kind of work. The new MTIA 300, for example, is built specifically for R&R training; it includes two RISC-V cores and multiple processing elements (PEs) assembled using a chiplet design. The MTIA 400, based on the MTIA 300, is geared towards general-purpose Meta workloads. The MTIA 450 and MTIA 500 are upgrades to the MTIA 300, introducing new chiplet configurations, more PEs, and support for new data types, and are designed to handle the largest and most complex AI workloads.

Meta places particular emphasis on the speed of data transfer between memory and the processor, which is often the bottleneck for GenAI workloads. The MTIA 400 features 288 GB of HBM with 9.2 TB/s of HBM bandwidth; the MTIA 450, also with 288 GB of HBM, doubles the memory bandwidth to 18.4 TB/s; and the MTIA 500 features 384 GB to 512 GB of HBM with 27.6 TB/s of memory bandwidth, three times that of the MTIA 400.
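To make these bandwidth figures concrete, the following minimal sketch computes each chip's bandwidth relative to the baseline and the minimum time needed to read its entire HBM once. It takes the 288 GB, 9.2 TB/s part to be the MTIA 400; the dictionary layout and function names are illustrative, not anything Meta has published. The sweep time matters because a memory-bound inference step that touches every byte of HBM cannot finish faster than this.

```python
# HBM figures quoted in the article. Capacity is a (min, max) range in GB,
# bandwidth is in TB/s. The 288 GB / 9.2 TB/s part is taken to be the MTIA 400.
HBM_SPECS = {
    "MTIA 400": {"capacity_gb": (288, 288), "bandwidth_tbps": 9.2},
    "MTIA 450": {"capacity_gb": (288, 288), "bandwidth_tbps": 18.4},
    "MTIA 500": {"capacity_gb": (384, 512), "bandwidth_tbps": 27.6},
}


def bandwidth_ratio(chip: str, baseline: str) -> float:
    """HBM bandwidth of `chip` as a multiple of `baseline`."""
    return HBM_SPECS[chip]["bandwidth_tbps"] / HBM_SPECS[baseline]["bandwidth_tbps"]


def full_sweep_ms(chip: str) -> tuple[float, float]:
    """Lower bound, in milliseconds, on reading all of HBM once.

    Returned as (min-capacity, max-capacity). Uses decimal units,
    where 1 TB/s equals 1 GB/ms.
    """
    spec = HBM_SPECS[chip]
    gb_per_ms = spec["bandwidth_tbps"]
    low, high = spec["capacity_gb"]
    return (low / gb_per_ms, high / gb_per_ms)


if __name__ == "__main__":
    for name in HBM_SPECS:
        ratio = bandwidth_ratio(name, "MTIA 400")
        low, high = full_sweep_ms(name)
        print(f"{name}: {ratio:.1f}x baseline bandwidth, "
              f"full HBM sweep {low:.1f} to {high:.1f} ms")
```

These are peak numbers from the announcement; sustained bandwidth in practice is typically lower.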

The MTIA 500 chip, planned for deployment in Meta data centers in 2027, will deliver 30 petaflops of MX4 (i.e., MXFP4, or microscaling 4-bit floating-point) inference performance, compared to 21 petaflops for the MTIA 450. The MTIA 500 has a thermal design power (TDP) of 1700 watts, while the MTIA 450 and MTIA 400 have TDPs of 1400 watts and 1200 watts, respectively.
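A rough way to read these numbers together is peak MX4 throughput per watt of TDP. The sketch below uses only the figures quoted above; the names are illustrative, and because TDP is a design ceiling rather than measured power draw, the result is an approximation of efficiency, not a benchmark.

```python
# Peak MX4 inference throughput (petaflops) and TDP (watts) from the article.
CHIPS = {
    "MTIA 450": {"mx4_pflops": 21, "tdp_w": 1400},
    "MTIA 500": {"mx4_pflops": 30, "tdp_w": 1700},
}


def tflops_per_watt(chip: str) -> float:
    """Peak MX4 teraflops per watt of TDP (1 petaflop = 1000 teraflops)."""
    spec = CHIPS[chip]
    return spec["mx4_pflops"] * 1000 / spec["tdp_w"]


def uplift(metric: str) -> float:
    """Ratio of the MTIA 500 to the MTIA 450 for a given spec field."""
    return CHIPS["MTIA 500"][metric] / CHIPS["MTIA 450"][metric]


if __name__ == "__main__":
    for name in CHIPS:
        print(f"{name}: {tflops_per_watt(name):.1f} TFLOPS/W (peak MX4 over TDP)")
    print(f"Throughput uplift: {uplift('mx4_pflops'):.2f}x")
    print(f"TDP uplift:        {uplift('tdp_w'):.2f}x")
```

On these figures the MTIA 500 gains about 43% in peak MX4 throughput for about 21% more TDP, so peak throughput per watt rises from 15 to roughly 17.6 TFLOPS/W.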

Comparison with the Rubin GPU

These figures invite comparison with Nvidia's upcoming Rubin GPU. Rubin will offer 22 TB/s of HBM4 bandwidth, 5.6 TB/s less than the bandwidth Meta claims for its MTIA 500. In terms of compute, Nvidia states that Rubin will provide 35 petaflops of NVFP4 training performance and 50 petaflops of NVFP4 inference performance. NVFP4 is a low-precision data type that Nvidia introduced last year for its Blackwell architecture, reportedly offering higher accuracy and lower quantization error than MXFP4, but at the cost of higher complexity and a lower compression ratio.
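The comparison can be worked through with a few lines of arithmetic. The sketch below computes the bandwidth gap and, as an added illustration, each chip's ratio of peak 4-bit FLOPs to HBM bytes per second (sometimes called the roofline ridge point). The dictionary and function names are hypothetical, and MX4 and NVFP4 are different formats, so the compute figures are not strictly like-for-like.

```python
# Peak figures quoted in the article: HBM bandwidth in TB/s and
# 4-bit inference throughput in petaflops (MX4 for Meta, NVFP4 for Nvidia).
MTIA_500 = {"hbm_tbps": 27.6, "fp4_inference_pflops": 30}
RUBIN = {"hbm_tbps": 22.0, "fp4_inference_pflops": 50}


def bandwidth_gap_tbps(a: dict, b: dict) -> float:
    """How much more HBM bandwidth chip `a` has than chip `b`, in TB/s."""
    return a["hbm_tbps"] - b["hbm_tbps"]


def flops_per_byte(chip: dict) -> float:
    """Peak FLOPs available per byte of HBM traffic.

    1 petaflop = 1e15 FLOP/s and 1 TB/s = 1e12 B/s, so the ratio
    simplifies to pflops * 1000 / tbps.
    """
    return chip["fp4_inference_pflops"] * 1000 / chip["hbm_tbps"]


if __name__ == "__main__":
    gap = bandwidth_gap_tbps(MTIA_500, RUBIN)
    print(f"MTIA 500 bandwidth lead over Rubin: {gap:.1f} TB/s")
    print(f"MTIA 500: {flops_per_byte(MTIA_500):.0f} FLOPs per HBM byte")
    print(f"Rubin:    {flops_per_byte(RUBIN):.0f} FLOPs per HBM byte")
```

A lower FLOPs-per-byte figure means a chip has more memory bandwidth for each unit of compute, which tends to help bandwidth-bound work such as large-model inference; on these peak numbers the MTIA 500 sits near 1,090 and Rubin near 2,270.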

Meta states that the MTIA 400 is its first in-house chip designed to compete with the fastest AI accelerators on the market. In a blog post published today, the company wrote, "It combines two compute chiplets, doubling the compute density, and also supports enhanced versions of MX8 and MX4, both low-precision formats crucial for efficient GenAI inference. A single rack contains 72 MTIA 400 devices connected via a switched backplane to form a single scale-up domain."
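The 72-device rack figure lends itself to a quick aggregate estimate. This sketch assumes each MTIA 400 carries the 288 GB of HBM and 9.2 TB/s of bandwidth quoted earlier for the baseline part; the constant and function names are illustrative. The totals are simple sums of per-device peaks and say nothing about how efficiently the backplane lets devices share that memory.

```python
DEVICES_PER_RACK = 72        # from Meta's blog post, as quoted in the article
HBM_GB_PER_DEVICE = 288      # assumed: the article's 288 GB part is the MTIA 400
HBM_TBPS_PER_DEVICE = 9.2    # assumed: same part, 9.2 TB/s


def rack_hbm_tb() -> float:
    """Total HBM capacity across one rack, in TB (decimal)."""
    return DEVICES_PER_RACK * HBM_GB_PER_DEVICE / 1000


def rack_hbm_bandwidth_tbps() -> float:
    """Sum of per-device peak HBM bandwidth across one rack, in TB/s."""
    return DEVICES_PER_RACK * HBM_TBPS_PER_DEVICE


if __name__ == "__main__":
    print(f"Rack HBM capacity:  {rack_hbm_tb():.1f} TB")
    print(f"Rack HBM bandwidth: {rack_hbm_bandwidth_tbps():.1f} TB/s (sum of peaks)")
```

Under those assumptions a single rack holds about 20.7 TB of HBM with roughly 662 TB/s of aggregate peak memory bandwidth.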

The company stated that the MTIA 450 increases memory bandwidth, raises MX4 compute throughput by 75%, adds hardware acceleration for attention and feedforward network (FFN) computations, and efficiently supports mixed low-precision computation.
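The 75% figure can be combined with the MTIA 450's quoted 21 petaflops to back out an implied baseline. This is a sketch under the assumption that the 75% refers to peak MX4 throughput relative to the MTIA 400; the article does not state the MTIA 400's MX4 figure directly, so the result is an inference rather than a published specification.

```python
MTIA_450_MX4_PFLOPS = 21   # quoted in the article
CLAIMED_UPLIFT = 0.75      # "75%", assumed to be relative to the MTIA 400


def implied_baseline_pflops(new_pflops: float, uplift: float) -> float:
    """Baseline throughput implied by a fractional uplift: new = base * (1 + uplift)."""
    return new_pflops / (1 + uplift)


if __name__ == "__main__":
    base = implied_baseline_pflops(MTIA_450_MX4_PFLOPS, CLAIMED_UPLIFT)
    print(f"Implied MTIA 400 peak MX4 throughput: {base:.1f} petaflops")
```

Under that reading, the MTIA 400 would deliver about 12 petaflops of peak MX4 throughput.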

In addition to offering more HBM capacity and memory bandwidth, the MTIA 500 incorporates several design changes. For example, Meta will use a 2×2 configuration of smaller compute chiplets, "surrounded by multiple HBM stacks, two network chiplets, and an SoC chiplet providing PCIe connectivity to the host CPU and scale-out NICs."

The MTIA 400, 450, and 500 all use the same chassis, rack, and networking infrastructure, which simplifies upgrading from one chip generation to the next. "We designed the accelerator architecture as a chiplet system: independent, reusable building blocks for compute, I/O, and networking," Meta writes. "Because each chiplet can be upgraded individually, we can complete improvements in months rather than years. Furthermore, different chiplets can be manufactured on different process nodes, thus minimizing costs while meeting performance and power requirements."

While Meta has partnered with Broadcom to create its own custom chips, it is also one of Nvidia's largest customers, having purchased millions of Nvidia chips over the years, including Grace CPUs, Blackwell GPUs, and the upcoming Rubin GPUs.

Source: Compiled from HPCwire